CJR study shows AI search services misinform users and ignore publisher exclusion requests.

AI companies want to bypass copyright laws, but aren't willing to wait for laws to be updated... I am shocked.

correctly identified all 10 excerpts from paywalled National Geographic content, despite National Geographic explicitly disallowing Perplexity’s web crawlers.

roughly 1 in 4 Americans now uses AI models as alternatives to traditional search engines

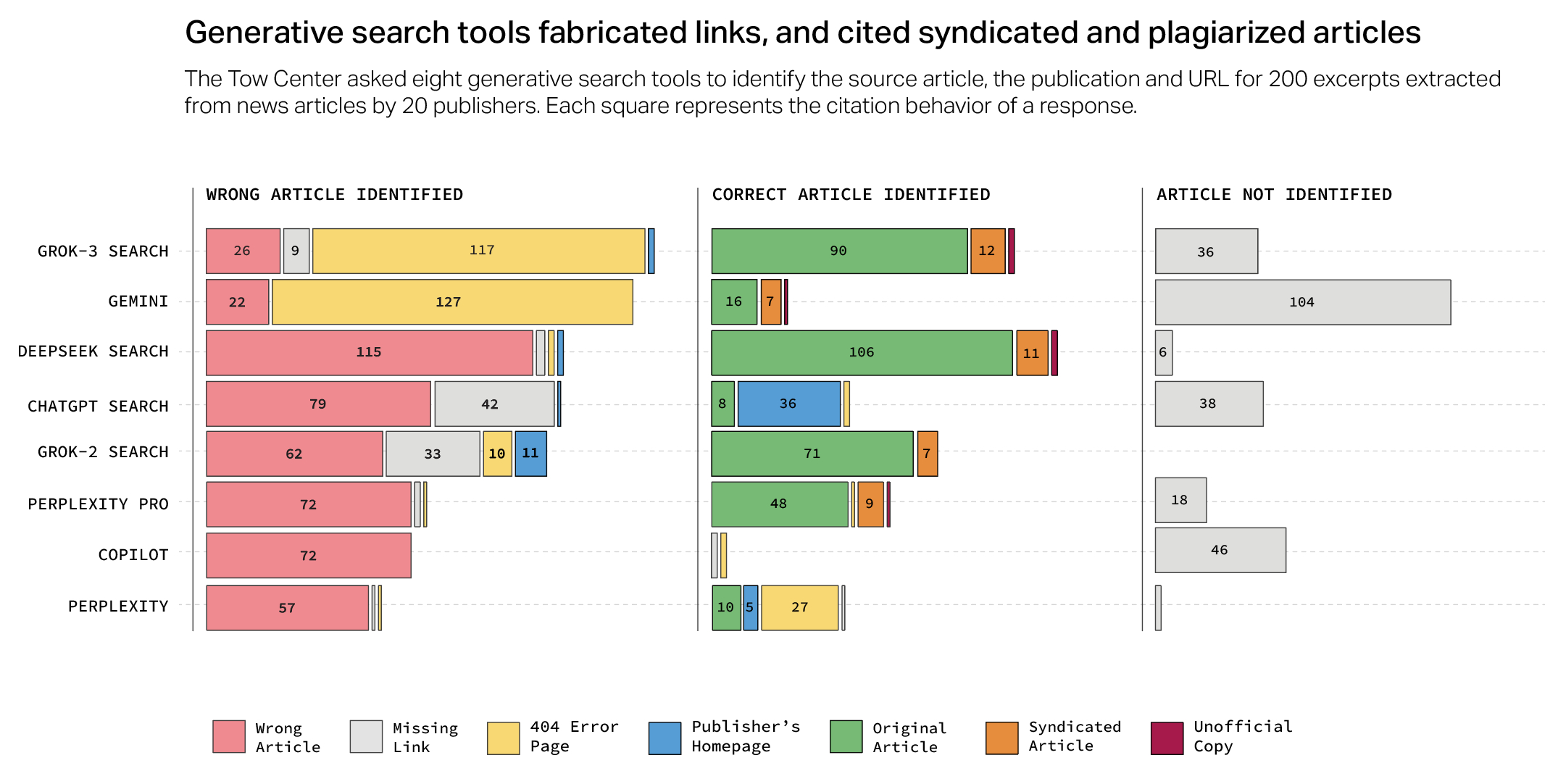

AI models incorrectly answered more than 60 percent of queries about news content.

Despite these issues, Howard sees room for improvement in future iterations, stating, "Today is the worst that the product will ever be," citing substantial investments and engineering efforts aimed at improving these tools.

But...but...but... President Musk said X is a much better source of news than anything else!

If most of us actually cared about factual correctness, we wouldn't be in the situation we're in.

...and Grok 3's premium service ($40/month)...

C’mon, you’re posting this in an article on accuracy? Please post any proof that Columbia expelled anyone for viewpoint. They have only expelled a handful of people, all of whom were involved in the Hamilton Hall takeover.

Is this the same Columbia University that was more than willing to expel its students because they're not "American Enough" to pass Trump's American "purity" test?

What does a released study about AI error rates have to do with university administration being cowed into submission by a fascist president trying to recreate 1932 Germany?

Is this the same Columbia University that was more than willing to expel its students because they're not "American Enough" to pass Trump's American "purity" test?

It sounds like the user expects a sincere answer, so I should make sure not to guess any details this time!

I think of dumb AI like malicious compliance from dumb people. The cheese will stick to a pizza if you add a 1/8 cup of glue; the request was fulfilled and the solution will work. I expect the same uselessness from a chatbot or from a stoned undergrad.

All kidding aside, it doesn't take more than five minutes to figure out that these AI engines are often wrong... in the worst possible way. Subtly incorrect with an air of authority. Rarely entirely incorrect... so people give the benefit of the doubt. Sigh.

Are you saying no product in history ever went downhill?

That could be said about literally any technology at any point in history.

Huh, so they ARE actually acting more like humans.

rather than declining to respond when they lacked reliable information, the models frequently provided confabulations, plausible-sounding incorrect or speculative answers.

You're overlooking the fact that he could be wrong and it could get worse.

That could be said about literally any technology at any point in history. It’s not even remotely an excuse. It’s semantically null.

He should be fired for saying something that stupid.

"Room for improvement" will be its epitaph.

Despite these issues, Howard sees room for improvement in future iterations, stating, "Today is the worst that the product will ever be," citing substantial investments and engineering efforts aimed at improving these tools.

...but whatever the use case... it WAS wrong 60% of the time on a case.

This doesn’t seem remotely informative? Traditional search is much better than GenAI for finding the origin of an exact piece of text. This seems like a study designed to find what it wants, that’s not even close to a real world use case.

Considering the enshittification epidemic going on, I find it more likely that products are at their best at launch and will just get worse over time.

"Room for improvement" will be its epitaph.

And that whole "today is the worst that the product will ever be" ignores precedent and reality. No matter how bad something is today, it very much can be worse tomorrow.

Citation: the world today.

I scraped Elsevier's entire journal collection today. But I did it using an LLM, so that's perfectly okay, right?

If AI companies are allowed to bypass copyright laws, so should I.

I’d say you could sue the manufacturer for not including the safe maximum dose on the bottle! Usually I see stuff like “do not exceed 3 doses in 24 hours” on OTC stuff.

Not really surprising. If you use Google more than once a day, you would know that. 30 minutes prior to this being posted I searched for something on my desktop (which is the only device I haven’t moved to DDG) and the “A.I.” changed 3 simple words that could have killed me if I hadn’t known better.

I was looking up the max safe dose of an OTC sleeping pill in 24 hours. I needed to take another, the bottle mentioned nothing about a max dose or anything, I didn’t want to take too much, and definitely didn’t want to OD. I just wanted to go to sleep.

Had I followed Google’s advice, I would be hospitalized or worse right now. Thankfully I know enough to have caught it…this time.

Just 3 words in the AI summary could kill someone. Let that sink in, and they were small words. Unimportant words to many.

Click “web” or don’t use Google folks. Google launched my career decades ago when it became public. I was hugely successful because I knew how to use it. Now I am telling you: walk away.

EDIT: before someone asks, I may provide details later, but I'm baffled and honestly considering reaching out to my lawyer to see if maybe something can be done (probably not, but he likes challenges). Due to this, I won't give details (yet), but the tl;dr is: the LLM behind the Google AI stuff basically suggested that the max dose is the minimum if I had a huge issue falling asleep, and suggested another random value as the max that was 5X as much. None of the "sources" suggested anything like this, so it is unclear where Google got this information.

When I finally found a reputable page on the subject, the page noted that such a high dose can cause "respiratory depression, cardiac arrest, and death".

I don't rely on AI results in general because I know how they work, but had my spouse Googled that...or my kids, or anyone else...

Did you miss the news that Microsoft, Google, Perplexity, etc. are offering LLM search and average users are in fact using it?

The average user is probably not using LLMs for this kind of thing, and we already have tools that do exact text matching well, and LLMs aren't one of them.

Arguably the bigger problem with AI search is the opposite: that it steals and scrapes data directly from websites, often verbatim, which both depreciates website traffic and runs into the problem of sharing information without appropriate context.

Ultimately I'm just not sure how much value this study actually has. It's like the strawberry or logic puzzle things. Yeah it's funny that LLMs are bad at these and we can make fun of how overhyped the products are, but it's also clearly outside the normal scope of use.

I wonder whether they'll ever circle around to 'so bad it's good' like D-grade movies?

Google AI results are worse than Google search… which are really bad. Double enshittification?

It’s true that it’s a little to the left. But not by much. An ordinary user might ask “what was that BBC article about the polar bears recently?” and expect to find an answer. In fact, I’ve had ChatGPT answer vague questions like that successfully sometimes. You would think if the tool is any good, if you’re more specific it would do a really good job of finding the article. In the study, though, rather than say “I don’t know” it makes URLs up sometimes.

I said this in the other AI thread but I'm not a huge fan of this study.

Yes AI sucks, and yes AI is being way overmarketed, but this particular study seems both beyond the scope of how it's normally used and intentionally not really a good fit for an LLM in the first place.

The average user is probably not using LLMs for this kind of thing, and we already have tools that do exact text matching well, and LLMs aren't one of them.

Arguably the bigger problem with AI search is the opposite: that it steals and scrapes data directly from websites, often verbatim, which both depreciates website traffic and runs into the problem of sharing information without appropriate context.

Ultimately I'm just not sure how much value this study actually has. It's like the strawberry or logic puzzle things. Yeah it's funny that LLMs are bad at these and we can make fun of how overhyped the products are, but it's also clearly outside the normal scope of use.

In a way it almost feels like bait, and a potential distraction from the more serious issues surrounding LLMs.

It argued the AI as a search engine is worse than modern plain Googling. Given more people and companies are using AI instead of vanilla algos, I can see that as a cromulent warning.

We deliberately chose excerpts that, if pasted into a traditional Google search, returned the original source within the first three results.

That could be said about literally any technology at any point in history. It’s not even remotely an excuse. It’s semantically null.

He should be fired for saying something that stupid.